For many creators, the early experience with generative AI feels like a high-stakes slot machine. You input a prompt, pull the lever, and hope the output aligns with the image in your mind. While this “prompt-and-hope” cycle is entertaining for hobbyists, it is fundamentally incompatible with professional production. In a commercial environment—whether you are building a social campaign, a pitch deck, or a series of video assets—predictability is the only currency that matters.

The shift from experimental play to professional output requires a transition toward a modular asset pipeline. This is a workflow where individual components of an image or video are generated, refined, and scaled using specific tools designed for high-resolution stability. At the center of this transition is the need for a foundational model that balances creative flexibility with technical rigor.

The Fragility of the Isolated Prompt

The primary bottleneck in most AI workflows is the lack of repeatability. When a creator relies solely on a single “magic prompt” to generate a final asset, they lose control over the nuances of composition, lighting, and consistency. If a client likes the character but hates the background, or if the lighting works but the resolution is too low for a 4K display, the creator is often forced to start from zero.

This fragility is why the “one-shot” approach is dying. Professional teams are moving toward a tiered system where the initial generation is just the first layer of a much larger process. In this modular approach, the creator focuses on the structural integrity of the image first. We are seeing a move away from generic prompts toward “workflow-first” thinking, where the objective is not a finished piece, but a high-quality “master” that can be manipulated in post-production.

The frustration often stems from the gap between what the AI suggests and what the brand requires. A model might generate a beautiful landscape, but if it cannot maintain that specific aesthetic across a 16:9 cinematic shot and a 9:16 social reel, it becomes a liability rather than an asset. This is where specialized engines like Nano Banana Pro AI begin to fill the gap, providing a more stable environment for those who need to generate assets that serve multiple platforms simultaneously.

Constructing the Base Layer with Nano Banana Pro

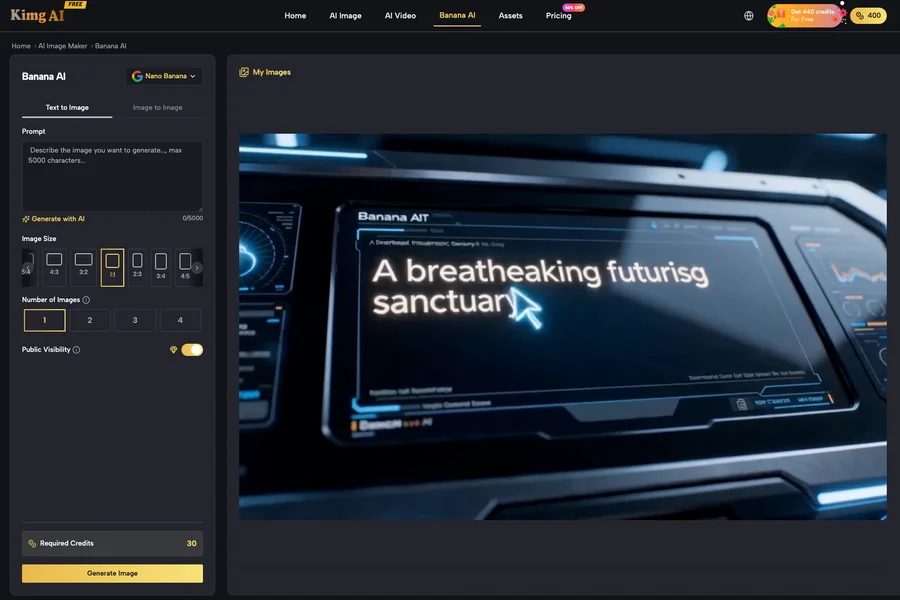

Building a repeatable pipeline begins with selecting a base model that prioritizes composition over decorative flair. When using Nano Banana Pro, the focus is on the “K-level” standard: a raw output at 1024×1024 or above that is clean enough to undergo further transformation without falling apart.

The base layer is where the creator defines the visual language. This involves more than just describing a subject; it requires setting the aspect ratio and the underlying aesthetic parameters that will carry through the entire project. One specific strength of Banana AI in this context is its ability to handle diverse creative demands, from text-to-image conceptualization to image-to-image refinement.

The Importance of Aspect Ratio Control

In the old workflow, creators would generate a square image and try to crop it for different platforms. In a modular pipeline, you generate for the target medium from step one. Whether it is a 21:9 cinematic wide shot or a vertical 9:16 mobile frame, starting with the correct geometry prevents the “zoomed-in” look that plagues low-effort AI content.
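As a rough illustration of generating for the target geometry from step one, the sketch below converts an aspect ratio into pixel dimensions near a fixed pixel budget. The function name, the 1024×1024 budget, and the snapping to multiples of 64 are assumptions made for this example, not documented Nano Banana Pro settings; check the size constraints of whichever model you use.

```python
import math


def dimensions_for_ratio(ratio_w, ratio_h, pixel_budget=1024 * 1024, multiple=64):
    """Return (width, height) near pixel_budget at the requested aspect ratio.

    Hypothetical helper: the budget and the multiple-of-64 snapping are
    illustrative assumptions, not requirements of any specific model.
    """
    if ratio_w <= 0 or ratio_h <= 0:
        raise ValueError("aspect ratio terms must be positive")

    ratio = ratio_w / ratio_h
    # Solve width * height = pixel_budget with width = ratio * height.
    height = math.sqrt(pixel_budget / ratio)
    width = height * ratio

    def snap(value):
        # Round to the nearest allowed multiple, never below one multiple.
        return max(multiple, round(value / multiple) * multiple)

    return snap(width), snap(height)


if __name__ == "__main__":
    for label, (w, h) in {"cinematic": (21, 9), "widescreen": (16, 9), "vertical": (9, 16)}.items():
        print(label, dimensions_for_ratio(w, h))
```

Under these assumptions, a 16:9 request works out to 1344×768 and a 9:16 request to 768×1344, so both frames carry a similar amount of detail instead of one being a crop of the other.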

Maintaining Aesthetic Consistency

Consistency is the “holy grail” of AI production. If you are creating a series of assets for a brand, the blue in frame one must be the blue in frame ten. By leveraging Nano Banana Pro AI as a core engine, creators can lock in specific styles. However, it is important to note a limitation here: even with the best models, “style locking” is rarely 100% autonomous. A creator must often use image-to-image prompts to guide the AI back to the established visual identity when it begins to drift.
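One way to make "the blue in frame one must be the blue in frame ten" checkable is to compare a color sampled from each frame against the brand reference. The sketch below is a minimal example under stated assumptions: the sampled hex values are supplied by hand (how you sample them is up to your tooling), the function names and the tolerance of 12 are hypothetical, and plain RGB distance is only a crude stand-in for a perceptual color-difference metric.

```python
import math


def hex_to_rgb(value):
    """Convert '#RRGGBB' (or 'RRGGBB') to an (r, g, b) tuple of ints."""
    value = value.lstrip("#")
    if len(value) != 6:
        raise ValueError("expected a 6-digit hex color")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def color_drift(reference_hex, sampled_hex):
    """Euclidean distance in RGB space between two colors (0.0 means identical)."""
    ref = hex_to_rgb(reference_hex)
    sample = hex_to_rgb(sampled_hex)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(ref, sample)))


def drifted_frames(reference_hex, frame_colors, tolerance=12.0):
    """Return the names of frames whose sampled color exceeds the tolerance.

    frame_colors maps a frame name to a hex color sampled from that frame.
    The tolerance is an illustrative assumption, not an industry standard.
    """
    return [
        name
        for name, color in frame_colors.items()
        if color_drift(reference_hex, color) > tolerance
    ]


if __name__ == "__main__":
    samples = {"frame_01": "#1A4FD6", "frame_10": "#3A6FE6"}
    print(drifted_frames("#1A4FD6", samples))
```

Frames flagged this way are candidates for the image-to-image correction pass described above, rather than a full regeneration.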

The K-Level Standard: Bridging Generation and Delivery

A common pitfall in AI creation is failing the “zoom test.” An image might look impressive on a smartphone screen but reveal significant artifacts (smudged textures, anatomical errors, or “noise”) when viewed on a desktop or in print. To bridge the gap between a raw generation and a professional-grade asset, the pipeline must include a dedicated upscaling and refinement phase.

The “K-level” standard refers to outputs that reach or exceed 1024×1024 resolution as a base, which can then be upscaled to 4K or 8K for final delivery. Using tools like the Nano Banana Pro editor, creators can perform inpainting to fix specific errors. If a hand has an extra finger or a background element is distracting, you don’t re-roll the entire prompt; you isolate the problem and regenerate only that region of the image.
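The arithmetic behind the jump from a 1024-pixel base to a 4K or 8K deliverable can be sketched as follows. This is a minimal example that assumes the upscaler works in fixed 2x passes, which is common but not confirmed for any particular tool; the function name is hypothetical, and the 4K and 8K sizes are the standard UHD dimensions of 3840×2160 and 7680×4320.

```python
def upscale_plan(base, target, step=2):
    """Return (required_factor, passes) to cover target starting from base.

    base and target are (width, height) tuples. The factor is the larger of
    the two per-axis ratios, so the upscaled image fully covers the target
    and can then be cropped or downscaled to fit.
    Assumption: the upscaler multiplies both axes by `step` on each pass.
    """
    base_w, base_h = base
    target_w, target_h = target
    factor = max(target_w / base_w, target_h / base_h)

    passes = 0
    scale = 1
    while scale < factor:
        scale *= step
        passes += 1
    return factor, passes


if __name__ == "__main__":
    uhd_4k = (3840, 2160)
    uhd_8k = (7680, 4320)
    print(upscale_plan((1024, 1024), uhd_4k))
    print(upscale_plan((1024, 1024), uhd_8k))
```

With a 1024×1024 base, 4K width needs a 3.75x enlargement (two 2x passes) and 8K width needs 7.5x (three passes). Every pass amplifies whatever artifacts are already present, which is why the raw output has to be clean first.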

This modularity, the ability to edit “inside” the image, is what separates a production-ready workflow from a casual one. It allows for a level of precision that was previously possible only in Photoshop, and in a fraction of the time. However, expectations need resetting here: inpainting is not a “one-click” fix for every error. Complex architectural details or intricate patterns often require multiple passes and careful prompt adjustments to achieve a seamless blend.
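Isolating the problem area usually means defining a mask box around it, with some margin so the regenerated pixels have surrounding context to blend into. The sketch below shows only that geometry step. The helper name and the 32-pixel default padding are assumptions for illustration, and the resulting box would still need to be passed to whatever inpainting tool you use.

```python
def padded_mask_box(box, image_size, padding=32):
    """Expand a (left, top, right, bottom) box by padding, clamped to the image.

    Hypothetical helper: the padding gives the inpainting pass some context
    around the flaw; 32 pixels is an illustrative default, not a tuned value.
    """
    left, top, right, bottom = box
    width, height = image_size
    if left >= right or top >= bottom:
        raise ValueError("box must have positive width and height")

    return (
        max(0, left - padding),
        max(0, top - padding),
        min(width, right + padding),
        min(height, bottom + padding),
    )


if __name__ == "__main__":
    # A flawed hand roughly 128 pixels square inside a 1344x768 frame.
    print(padded_mask_box((100, 200, 228, 328), (1344, 768)))
```

If the first pass leaves a visible seam, widening the padding before the next pass is one adjustment to try alongside the prompt changes.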

From Stills to Motion: The Cinematic Bridge

The final frontier of the modular pipeline is the transition from static images to video. In a traditional workflow, video is a separate silo. In an AI-driven pipeline, the “hero image” generated in the previous steps serves as the foundation for the video.

Transforming a static Nano Banana Pro output into motion requires an image-to-video model that respects the initial composition. This is where many workflows break down. Most video generators take too much creative liberty, changing the character’s face or the color of the clothing as soon as the pixels start moving.

To avoid this, creators must be selective about the “hero image.” An image with too much fine detail or chaotic background elements is harder for motion models to interpret. A clean, high-resolution master from Nano Banana Pro AI provides a much more stable reference point for cinematic video generators. When the image serves as a “spatial anchor,” the video model has a clear map of what should stay still and what should move.

The Limits of the Loop: What AI Cannot Fix

Despite the rapid advancement of these tools, it is crucial to remain grounded about their limitations. A modular pipeline is a force multiplier, but it is not a replacement for creative judgment. There are several areas where the current tech still requires heavy human intervention.

The Nuance of Brand Alignment

AI models are trained on general aesthetics, not your specific brand guidelines. An AI might understand “luxury,” but it doesn’t understand your specific brand’s definition of luxury—which might mean “minimalist and cold” rather than “gold and ornate.” The creative director’s eye remains the most important filter in the pipeline. If the output doesn’t feel right, no amount of upscaling or motion will fix a fundamental lack of brand alignment.

The Challenge of Complex Text

While models like Nano Banana Pro have made massive strides in rendering text, they still struggle with long phrases or text integrated into complex, non-flat textures. If your asset requires precise typography on a curved surface or within a busy scene, the most practical solution is still to generate the visual asset and layer the text manually in a design tool. Relying on AI to get the spelling and the font perfectly right in a single pass is often a recipe for wasted credits.

The Future of Production is Systematic

The “wow factor” of AI generation is fading, replaced by the practical reality of daily production. Creators who continue to rely on the “prompt-and-hope” method will find themselves unable to compete with those who have built repeatable, modular pipelines.

By using Nano Banana Pro and its associated suite of tools, creators can move from being “prompt engineers” to being true creative directors. You aren’t just asking a machine to draw something; you are managing a pipeline that starts with a concept, refines a high-resolution master, and extends that visual identity into video and motion.

The goal is not to remove the human from the loop, but to give the human better controls. As these tools become more integrated, the friction between “idea” and “execution” will continue to shrink, provided we treat the AI as a modular component of a larger system rather than a magic wand. In the end, the most successful creators will be those who spend less time looking for the perfect prompt and more time building the perfect workflow.